Are You Actually Progressing? Cross-Activity Interval Comparison Using the API

Hey everyone,

I’ve been experimenting with something that I think could benefit a lot of us, and I wanted to share the approach + results before making a proper feature request.

The Question

We all do recurring structured workouts — SweetSpot blocks, VO2max intervals, cadence drills. But how do you objectively answer: “Am I getting better at this specific effort over the past 8 weeks?”

Right now, you’d have to open each activity individually, find the matching interval, write down the numbers, and compare manually. Intervals.icu is amazing at per-activity analysis, but there’s no built-in way to compare the same labeled interval across multiple sessions.

What I Did

Using Python + the Intervals.icu API, I built a simple workflow:

- Label intervals consistently across recurring workouts (e.g., “Set1 95rpm”, “Set2 SweetSpot”), either at recording time or retroactively via PUT /api/v1/activity/{id}

- Query matching intervals across all activities of the same type

- Compute trends automatically (power, HR, decoupling, cadence delta)

That’s it. The magic is in the consistent labeling.
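To make the three steps concrete, here is a minimal sketch of the query-and-trend part. It assumes the activities have already been fetched and flattened into plain dicts; the field names (`date`, `intervals`, `label`, `avg_watts`, `avg_hr`) and the sample values are placeholders I made up for illustration, not the actual API response shape or my real data.

```python
def collect_by_label(activities, label):
    """Return (date, interval) pairs whose label matches exactly, oldest first."""
    matches = []
    for activity in activities:
        for interval in activity["intervals"]:
            if interval.get("label") == label:
                matches.append((activity["date"], interval))
    # ISO date strings sort chronologically when sorted as text
    matches.sort(key=lambda pair: pair[0])
    return matches


def trend(matches, field):
    """Compare one metric between the first and the last matching session."""
    first = matches[0][1][field]
    last = matches[-1][1][field]
    return {
        "first": first,
        "last": last,
        "change": last - first,
        "pct": (last - first) / first * 100,
    }


# Made-up sample data, deliberately out of order to show the sorting
activities = [
    {"date": "2024-02-05", "intervals": [
        {"label": "Set2 SweetSpot", "avg_watts": 200.0, "avg_hr": 150},
        {"label": "Recovery", "avg_watts": 100.0, "avg_hr": 120},
    ]},
    {"date": "2024-01-08", "intervals": [
        {"label": "Set2 SweetSpot", "avg_watts": 190.0, "avg_hr": 149},
    ]},
]

matches = collect_by_label(activities, "Set2 SweetSpot")
result = trend(matches, "avg_watts")
print(f"{result['first']}W -> {result['last']}W ({result['pct']:+.1f}%)")
```

Exact label matching is on purpose: it only works if the labels are identical across sessions, which is why the consistent labeling matters so much.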

What It Revealed

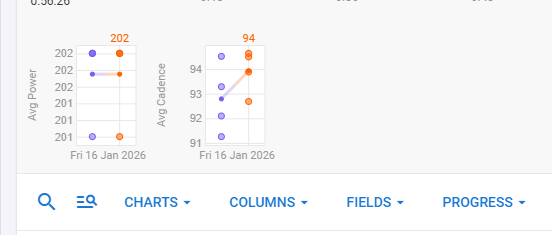

SweetSpot blocks over 8 weeks (6 sessions):

- Power: 195W → 201W (+3.2%) with HR basically flat (+2 bpm)

- Decoupling: 4.48% → 3.36% (−25%) — aerobic efficiency improving

- Min watts: 106W → 148W (+40%) — holding target much more consistently

Cadence drills — “95rpm” blocks over 8 weeks (5 sessions):

- Actual cadence: 79.9 rpm → 94.1 rpm — took 8 weeks to finally hit the target!

- Power: 150W → 156W (+4%) at the same HR, which is consistent with neuromuscular adaptation

These trends were invisible when looking at activities one by one. Seeing them lined up chronologically made the progression (and the problem areas) immediately obvious.
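For anyone unsure what the decoupling number means at interval level, here is a small sketch of the common first-half versus second-half Pw:HR definition, applied to a single block. This is the textbook formula as I understand it; the exact calculation Intervals.icu uses may differ, and the function name and sample streams below are made up.

```python
def decoupling_pct(power, heart_rate):
    """Percent drop in the power/HR ratio from the first half to the second half."""
    if len(power) != len(heart_rate) or len(power) < 2:
        raise ValueError("need two equal-length streams with at least 2 samples")

    half = len(power) // 2

    def ratio(p, h):
        # average power divided by average heart rate
        return (sum(p) / len(p)) / (sum(h) / len(h))

    first = ratio(power[:half], heart_rate[:half])
    second = ratio(power[half:], heart_rate[half:])
    return (first - second) / first * 100


# Made-up streams: steady power, HR drifting up in the second half
power = [200, 200, 200, 200]
heart_rate = [150, 150, 156, 156]

drift = decoupling_pct(power, heart_rate)
print(f"decoupling: {drift:.2f}%")
```

A lower value means HR drifted less for the same power, which is why a falling trend across sessions reads as improving aerobic efficiency.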

Where It Doesn’t Work

Pure endurance rides: there is no internal structure to compare, prescriptions vary by design, and outdoor terrain adds noise. For those, whole-activity metrics (Pw:HR ratio, global decoupling) remain more useful.

The Feature Request

I think this could be incredibly powerful as a native feature:

- “Compare Across Activities” — select a workout name pattern, pick a label, see a trend chart at interval granularity

- Label templates in workouts — define semantic labels (“Set1 SweetSpot”, “Recovery”) in the workout builder that auto-apply on recording

- Interval-level progression charts — a mini fitness chart scoped to a specific block type

For the Dev-Curious

Retroactive labeling works well via the API: the stream data is already there, so you’re just telling Intervals.icu how to slice it. Happy to share code snippets and more detailed methodology if there’s interest.
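As a rough idea of what the retroactive labeling call could look like, here is a sketch that only builds the PUT request and does not send it. The endpoint path is the one mentioned above; everything else is an assumption to check against the API docs before use: the JSON body shape (`intervals`, `start_index`, `end_index`, `label`), the Basic auth scheme with the literal username `API_KEY`, and the placeholder activity id and key.

```python
import base64
import json
import urllib.request

BASE_URL = "https://intervals.icu"


def build_label_request(activity_id, intervals, api_key):
    """Build (but do not send) a PUT request carrying relabeled intervals."""
    url = f"{BASE_URL}/api/v1/activity/{activity_id}"
    # ASSUMPTION: body shape is hypothetical, verify against the API docs
    body = json.dumps({"intervals": intervals}).encode("utf-8")
    # ASSUMPTION: Basic auth with the literal username "API_KEY"
    token = base64.b64encode(f"API_KEY:{api_key}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
    )


# Placeholder id, key, and sample indices, for illustration only
req = build_label_request(
    "i000000",
    [{"start_index": 600, "end_index": 1800, "label": "Set2 SweetSpot"}],
    "my-api-key",
)
print(req.get_method(), req.full_url)
```

Sending it would be a matter of passing `req` to `urllib.request.urlopen`, but I would test on a single activity first since this overwrites how that activity is sliced.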

Has anyone else tried systematic interval comparison across workouts? Curious to hear other approaches, and whether this kind of feature would be useful to you.

Context: indoor trainer, FTP ~220W, structured training since mid-2023